🧚CLEARFAIRY

Capturing Creative Workflows through Decision Structuring, In-Situ Questioning, and Rationale Inference


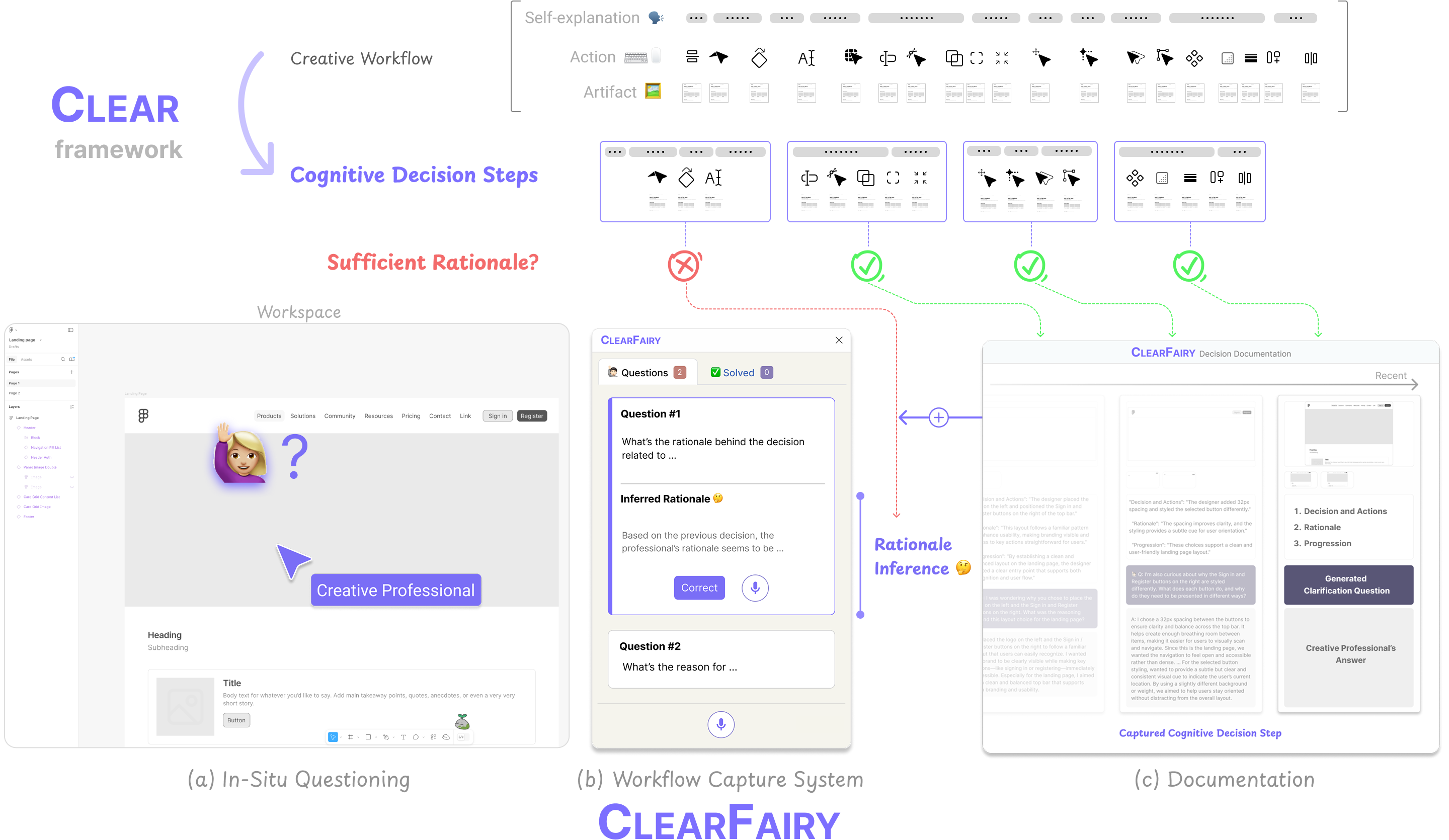

Capturing professionals' decision-making in creative workflows (e.g., UI/UX) is essential for reflection, collaboration, and knowledge sharing, yet existing methods often leave rationales incomplete and implicit decisions hidden. We present CLEARFAIRY, a think-aloud AI assistant for UI design that detects weak explanations, asks lightweight clarifying questions, and infers missing rationales.

Self-explanation during creative workflows faces three critical challenges:

Verbalized self-explanations are often "weak" — they provide insufficient reasoning without causal context, prior knowledge, or comparison of alternatives.

Answering clarifying questions in real time interrupts the creative flow, imposing a cognitive burden that hinders the design process.

Verbal reports alone fail to reveal implicit decisions — those based on expert conventions, aesthetic intuition, or tacit knowledge that feel too natural to explain.

CLEAR (Cognitive Linking of Explanations, Actions, and Results) structures workflows into cognitive decision steps.

A cognitive decision step is a unit of decision-making defined by dynamic, adaptive, and contextual boundaries. It comprises one or more linked explanations, actions, and artifacts.

"This page title lacks visual emphasis — I'll make it bolder and increase the size to clarify hierarchy and identity."

Dynamic: Decisions are structured in real time as they occur, not retrospectively, which preserves the natural flow of thought.

Adaptive: Step boundaries flex to individual differences; expertise level, working style, and task context all shape them.

Contextual: Each decision is fully understood only through the linked combination of explanations, actions, and their resulting artifacts.
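As a rough illustration of the structure described above, a cognitive decision step can be modeled as a record that links explanations, actions, and artifacts. This is a minimal sketch and not the plugin's actual data model: the class names `Action` and `DecisionStep`, their fields, and the `is_complete` helper are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Action:
    """One canvas operation (hypothetical shape, for illustration only)."""
    target: str     # name of the affected design element
    operation: str  # what was changed on that element


@dataclass
class DecisionStep:
    """One cognitive decision step: linked explanations, actions, artifacts."""
    explanations: List[str] = field(default_factory=list)
    actions: List[Action] = field(default_factory=list)
    artifacts: List[str] = field(default_factory=list)  # e.g. screenshot paths

    def is_complete(self) -> bool:
        # Assumed check: a step is fully interpretable only when
        # all three linked parts are present.
        return bool(self.explanations and self.actions and self.artifacts)


step = DecisionStep(
    explanations=[
        "This page title lacks visual emphasis; make it bolder and "
        "larger to clarify hierarchy and identity."
    ],
    actions=[
        Action("Page title", "set font weight to bold"),
        Action("Page title", "increase font size"),
    ],
    artifacts=["title-after.png"],
)
print(step.is_complete())
```

A step with actions but no explanation would fail `is_complete`, which corresponds to the "empty explanation" case that the system is designed to detect.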

CLEARFAIRY is a Figma plugin that operationalizes CLEAR through a generative pipeline: it captures, evaluates, and infers rationales without disrupting the creative workflow.

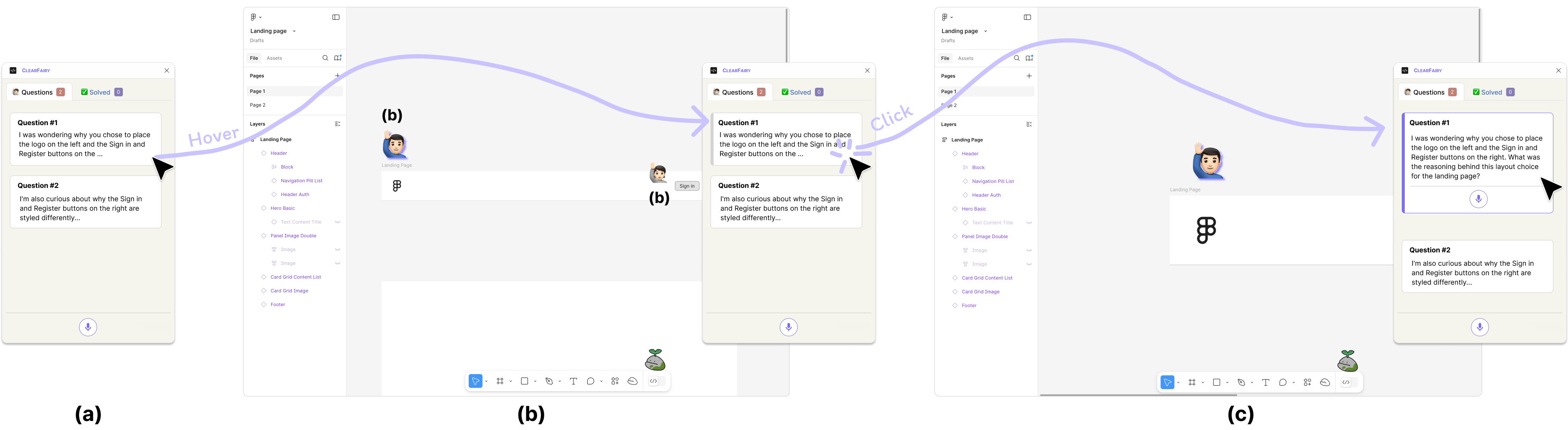

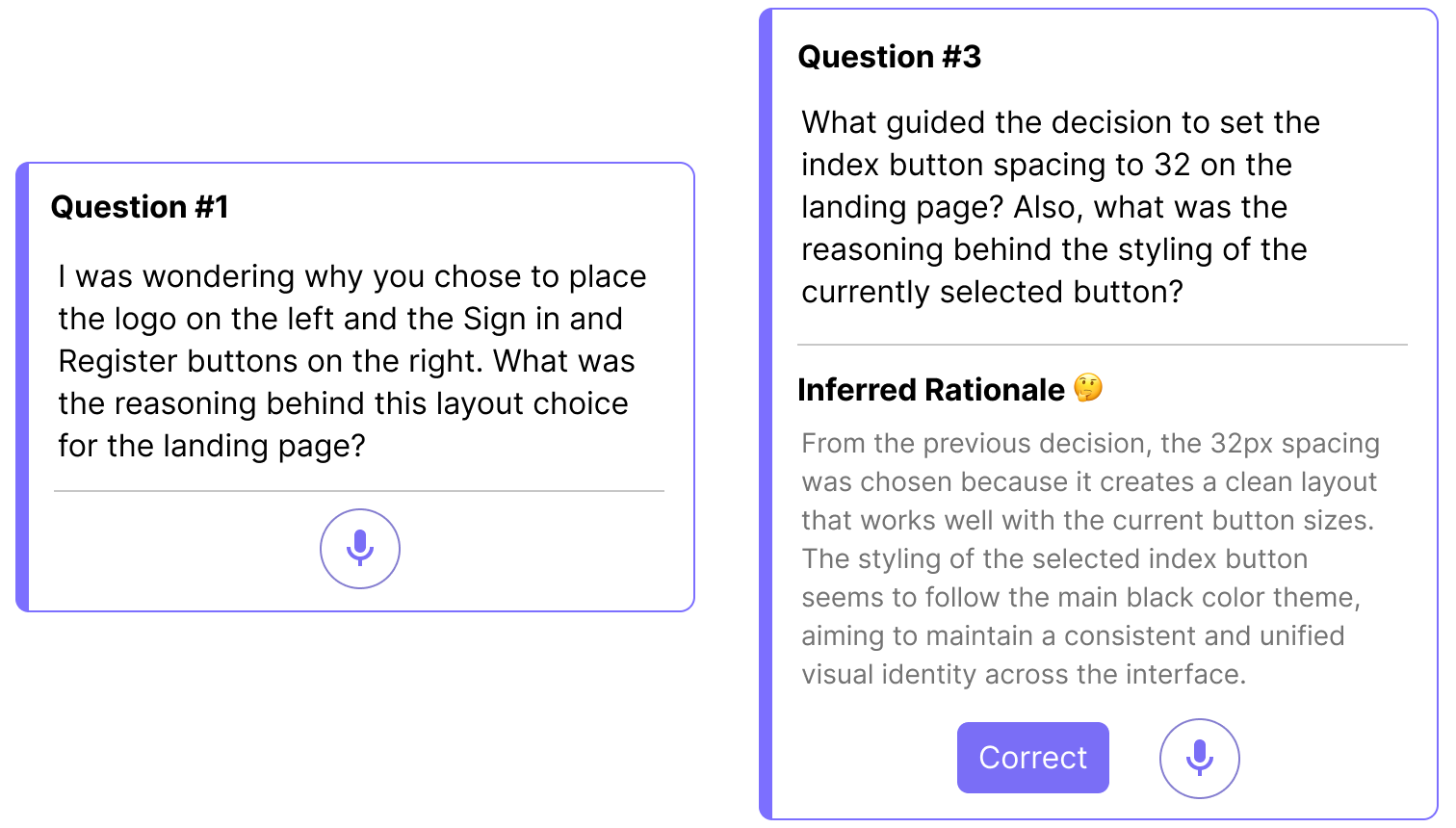

When the system detects a weak or empty explanation, it generates a targeted clarifying question and places it directly on the relevant element in the Figma canvas.
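The trigger logic described above might be sketched as follows. The real system generates its questions with a generative pipeline; the fixed templates here are only a stand-in, and the `Strength` enum, the `clarifying_question` function, and the returned dictionary shape are assumptions for this example.

```python
from enum import Enum
from typing import Dict, Optional


class Strength(Enum):
    STRONG = "strong"
    WEAK = "weak"
    EMPTY = "empty"


def clarifying_question(
    strength: Strength, element: str, action: str
) -> Optional[Dict[str, str]]:
    """Return a question anchored to a canvas element, or None if not needed.

    Template strings stand in for the generated question text.
    """
    if strength is Strength.STRONG:
        return None  # sufficient rationale, so do not interrupt the designer
    if strength is Strength.EMPTY:
        text = f"What led you to {action} on '{element}'?"
    else:  # Strength.WEAK
        text = f"Why did you choose to {action} on '{element}' over alternatives?"
    # 'anchor' names the element the question would be placed on.
    return {"anchor": element, "question": text}


print(clarifying_question(Strength.EMPTY, "Page title", "increase the font size"))
```

Returning `None` for strong explanations keeps the interaction lightweight: a question appears only when a rationale is missing or thin.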

ClearFairy uses a mixed-initiative design: designers explain rationales for their early decisions, and the system infers the rest from those examples, surfacing reasoning experts rarely articulate alone.

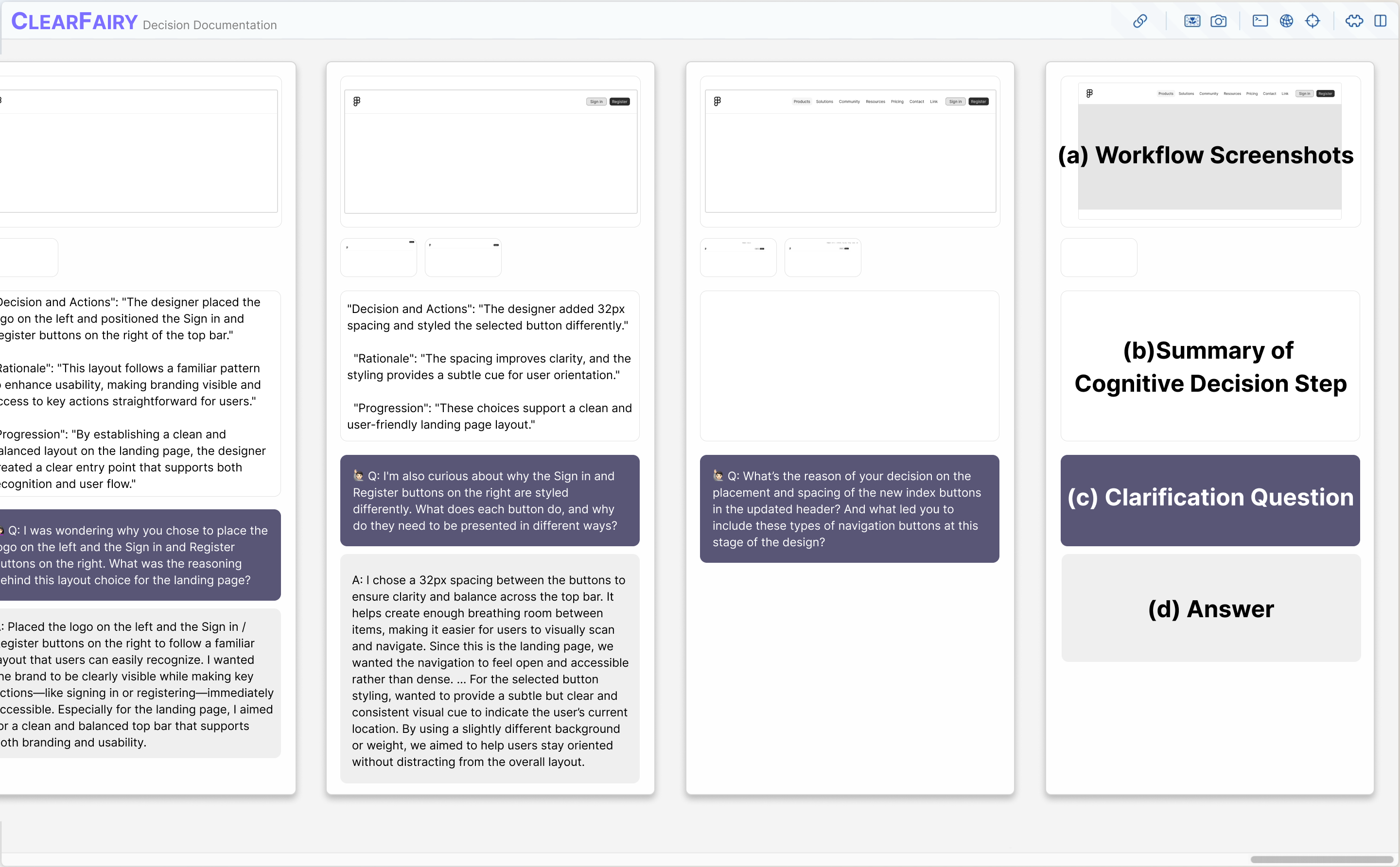

Each captured decision step is organized into a living document containing the decision, rationale, clarification Q&A, and artifact screenshots.
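One way to picture such a document entry is a small renderer that lays out the four listed parts as Markdown. This is a sketch under assumptions: the function name `render_step`, its parameters, and the output layout are invented for illustration and do not describe the plugin's actual document format.

```python
from typing import List, Tuple


def render_step(
    decision: str,
    rationale: str,
    qa: List[Tuple[str, str]],
    screenshots: List[str],
) -> str:
    """Render one decision step as a Markdown section (assumed layout)."""
    lines = [f"## {decision}", "", f"Rationale: {rationale}", ""]
    for question, answer in qa:
        lines.append(f"- Q: {question}")
        lines.append(f"  A: {answer}")
    if qa:
        lines.append("")
    for path in screenshots:
        lines.append(f"![artifact]({path})")
    return "\n".join(lines)


doc = render_step(
    decision="Emphasize page title",
    rationale="Bolder, larger text clarifies hierarchy and identity.",
    qa=[("Why bold rather than a color change?", "Color is reserved for actions.")],
    screenshots=["title-after.png"],
)
print(doc)
```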

We ran a within-subjects study with 12 UI/UX professionals, comparing CLEARFAIRY to a think-aloud baseline.

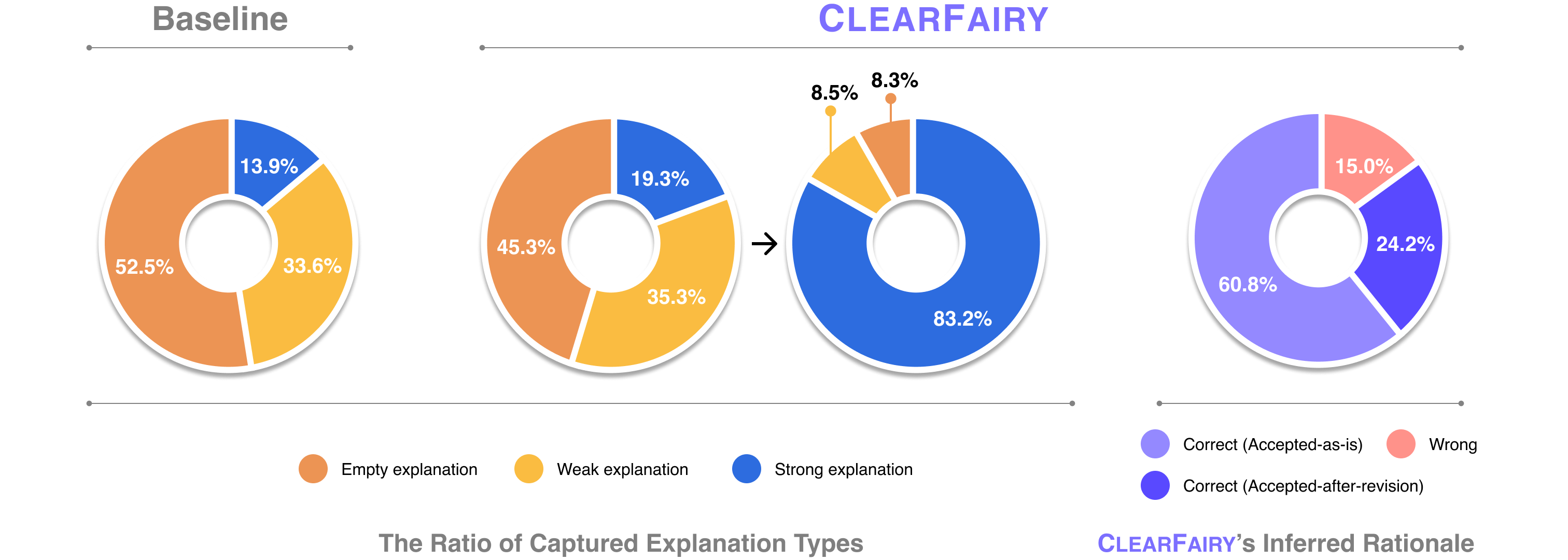

Strong: Provides sufficient causal reasoning, including goals, comparisons to alternatives, prior knowledge, or aesthetic/functional criteria for the decision.

Weak: Gives some context but leaves the rationale incomplete; it lacks the "why" behind the decision or relies on vague, surface-level descriptions.

Empty: Provides no rationale; the decision is made without any verbal justification, leaving the intent entirely implicit.

Quantitative results (N=12). CLEARFAIRY increased strong explanations from 13.9% → 83.2% while reducing empty explanations from 52.5% → 8.3%, with no significant increase in cognitive load (NASA-TLX).
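To make the size of these shifts concrete, the change in percentage points can be worked out directly from the reported figures. The snippet below uses only the four percentages stated above; the variable names are chosen for this example.

```python
# Reported share of explanations per condition, in percent.
baseline = {"strong": 13.9, "empty": 52.5}
clearfairy = {"strong": 83.2, "empty": 8.3}

# Change in percentage points, rounded to one decimal place.
change = {k: round(clearfairy[k] - baseline[k], 1) for k in baseline}
print(change)  # {'strong': 69.3, 'empty': -44.2}

# Relative increase in strong explanations (about sixfold).
print(round(clearfairy["strong"] / baseline["strong"], 1))  # 6.0
```

Strong explanations rose by 69.3 percentage points and empty explanations fell by 44.2 percentage points.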

Of CLEARFAIRY's AI-inferred rationales were accepted by participants (as-is or with minor revisions), reflecting strong alignment with their actual reasoning.

The system surfaced implicit decisions based on expert conventions, aesthetic intuition, and personal preferences that designers wouldn't have thought to explain.

No significant difference in NASA-TLX scores — despite capturing significantly more rationale, participants did not feel a higher task load.

As designers provided richer explanations, subsequent AI inferences became more specific and aligned — creating a productive feedback loop.

Beyond knowledge capture, cognitive decision steps open new possibilities for AI agents in creative workflows.

When an LLM was provided with captured cognitive decision steps with rationale, professionals preferred its predicted next actions in 66.7% of cases — vs. only 20% for the no-rationale condition.

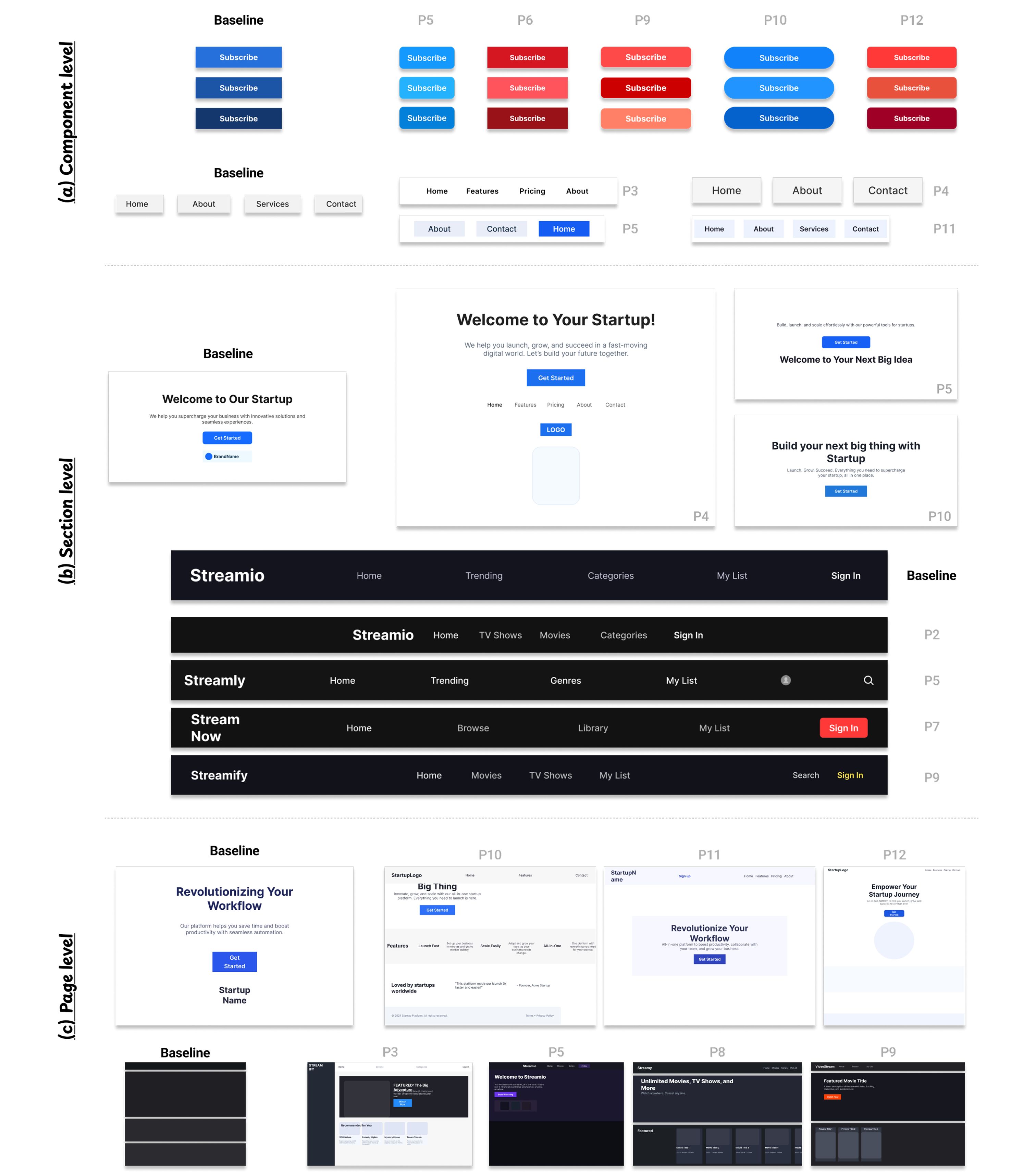

A ReAct-based Figma design agent, when given style guidelines extracted from decision steps, generated artifacts that were rated as more structured, detailed, and professionally aligned — at component, section, and page levels.

A peek at the kind of cognitive decision steps ClearFairy captures from real creative workflows.

CHI 2026 — ACM Conference on Human Factors in Computing Systems


@inproceedings{son2026clearfairy,
  title     = {ClearFairy: Capturing Creative Workflows through Decision Structuring, In-Situ Questioning, and Rationale Inference},
  author    = {Son, Kihoon and Choi, DaEun and Kim, Tae Soo and Kim, Young-Ho and Yun, Sangdoo and Kim, Juho},
  booktitle = {Proceedings of the 2026 CHI Conference on Human Factors in Computing Systems},
  year      = {2026},
  publisher = {ACM},
  doi       = {10.1145/3772318.3791680}
}